My takeaway series follows a Q&A format to explain AI concepts at three levels:

Anyone with general knowledge can understand them.

For anyone who wants to dive into the code implementation details of the concept.

For anyone who wants to understand the mathematics behind the technique.

The Concept of Activation Function

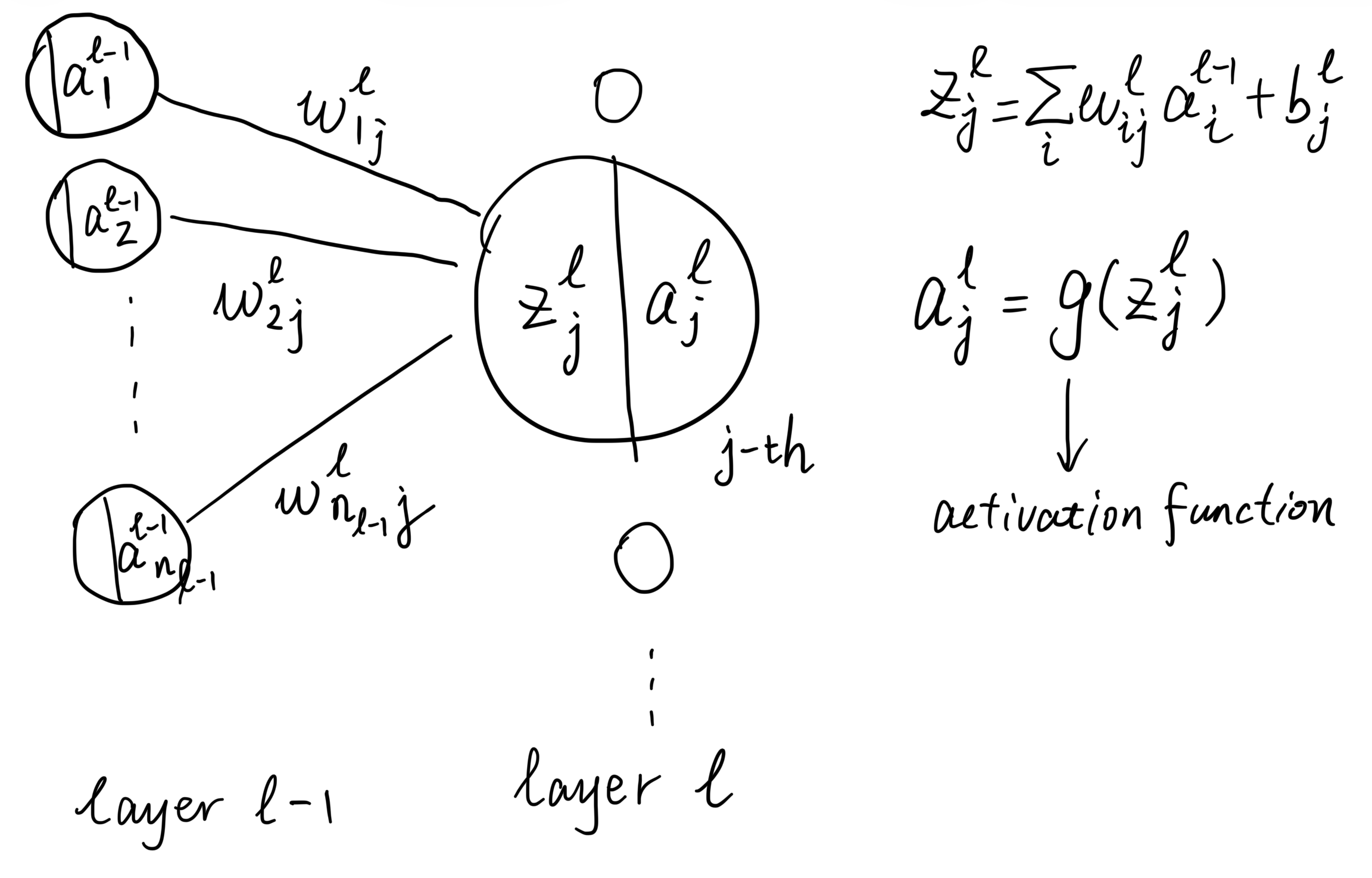

The activation function is a fundamental component of neural networks. A neural network has multiple layers of interconnected nodes (neurons); each neuron processes its input data and passes the result to the next layer. An activation function is a mathematical operation applied to the output of a neuron.

The activation function is attached to each neuron in a neural network. The neuron receives a weighted sum of inputs from the previous layer, and the activation function processes this sum to produce an output:

$$a = f(z)$$

For the activation function $f$

Input:

- The weighted sum of inputs to the neuron: $z$

Output:

- The activated output of the neuron: $a$

Activation functions introduce non-linearity into the model, allowing it to learn complex patterns in the data. Imagine if there were no activation functions in a neural network; it would essentially be a linear model.
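To make the "it would essentially be a linear model" point concrete, here is a minimal sketch in plain Python. The matrices, biases, and helper names (`matvec`, `matmul`, `add`) are made up for illustration. It stacks two layers without any activation function and checks that they compute the same thing as a single linear layer with weights `W2 W1` and bias `W2 b1 + b2`.

```python
# Two "layers" with no activation function: y = W2 (W1 x + b1) + b2.
# All numbers below are arbitrary illustrative values.

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def matmul(A, B):
    """Multiply matrix A by matrix B (both lists of rows)."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

def add(u, v):
    """Element-wise sum of two vectors."""
    return [a + b for a, b in zip(u, v)]

W1, b1 = [[1.0, 2.0], [0.5, -1.0]], [0.1, -0.2]
W2, b2 = [[2.0, 0.0], [1.0, 3.0]], [0.0, 1.0]
x = [3.0, -4.0]

# Forward pass through the two stacked linear layers.
two_layers = add(matvec(W2, add(matvec(W1, x), b1)), b2)

# The same map collapsed into ONE linear layer.
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)
one_layer = add(matvec(W, x), b)

print(two_layers, one_layer)
```

However many such layers are stacked, the same collapse applies, which is why a non-linear activation between layers is needed.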

Activation Functions

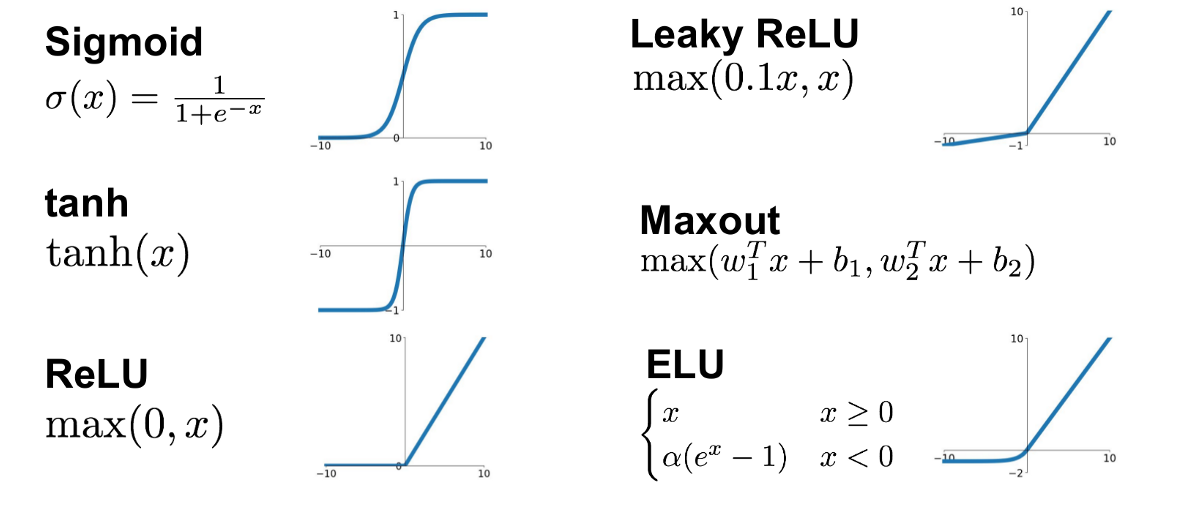

Common activation functions include:

According to the literal meaning of “activation”, the binary step function seems to be a good choice, as it clearly defines whether a neuron is activated (output 1) or not (output 0). However, it cannot be differentiated at the step point, and its derivative is zero elsewhere. This makes it unsuitable for backpropagation, which relies on gradients to update the parameters during training.

Sigmoid

The sigmoid function maps any real-valued number into the range (0, 1). It has an “S”-shaped curve (the English word “sigmoid” means “S-shaped”) and is defined by the formula:

$$\sigma(z) = \frac{1}{1 + e^{-z}}$$

The sigmoid function is a smooth version of the binary step function, which makes it differentiable so that backpropagation can work properly.
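As a rough illustration, the sketch below puts the binary step function next to the standard logistic sigmoid $1 / (1 + e^{-z})$. The function names and sample points are my own choices, and the two-branch form of `sigmoid` is just one common way to avoid overflow for large negative inputs.

```python
import math

def binary_step(z):
    """Hard threshold: 1 when z >= 0, otherwise 0."""
    return 1.0 if z >= 0 else 0.0

def sigmoid(z):
    """Logistic sigmoid 1 / (1 + e^{-z}), split in two branches to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)  # safe: z < 0, so exp(z) is at most 1
    return ez / (1.0 + ez)

# The step jumps at 0, while the sigmoid moves smoothly between 0 and 1.
for z in (-6.0, -1.0, 0.0, 1.0, 6.0):
    print(f"z={z:+.1f}  step={binary_step(z):.0f}  sigmoid={sigmoid(z):.4f}")
```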

Pros:

- It maps input values to an output range between 0 and 1 (like a probability), making it suitable for binary classification tasks.

- Smooth gradients, which help in optimization.

- Biologically plausible, as it mimics the firing rate of biological neurons.

Cons:

- Prone to the vanishing gradient problem, which can hinder the training of deep networks.

- Outputs are not zero-centred. The zig-zag phenomenon can occur during optimization, which can lead to inefficient weight updates during training.

- Computationally expensive due to the exponential function.

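Backpropagation computes the gradient of the loss function with respect to each layer’s parameters, starting from the last layer and moving backwards. The chain rule is applied, which means the gradients are multiplied by derivatives layer by layer. From the computation graph of a neural network, we can see that the gradients are multiplied by the weights and by the derivatives of the activation functions along the way.

For some activation functions like Sigmoid and Tanh, their derivatives are small over most of the input range and approach zero as the input moves away from zero:

- The derivative of the sigmoid function is $\sigma'(z) = \sigma(z)\,(1 - \sigma(z))$, which never exceeds $0.25$.

- The derivative of the tanh function is $\tanh'(z) = 1 - \tanh^2(z)$, which never exceeds $1$.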

When these small values multiply the gradients during backpropagation across multiple layers, the gradients can become very small, which stops the network from learning further. This is known as the vanishing gradient problem. The more layers there are, the more severe the problem can be; the closer a layer is to the input, the more serious the vanishing gradient becomes.
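The shrinking effect can be sketched numerically. The example below is a deliberately simplified setting of my own: a chain of single sigmoid neurons with every weight fixed to 1 and every pre-activation at the same value `z`. It multiplies the sigmoid derivatives along the chain. Even in the best case (`z = 0`, where the derivative peaks at 0.25) the product collapses quickly as layers are added.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    """Derivative of the sigmoid: s * (1 - s), at most 0.25 (reached at z = 0)."""
    s = sigmoid(z)
    return s * (1.0 - s)

def gradient_scale(num_layers, z=0.0):
    """Product of sigmoid derivatives along a chain of num_layers neurons.

    Simplifying assumption: all weights are 1 and every neuron sees the same z.
    """
    scale = 1.0
    for _ in range(num_layers):
        scale *= sigmoid_grad(z)
    return scale

for n in (1, 5, 10, 20):
    print(f"{n:2d} layers -> gradient scaled by {gradient_scale(n):.3e}")
```

In a real network the weights also enter the product, so this is only an illustration of the trend, not a prediction for any particular model.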

From the computation graph of neural network, we can see that the path from the loss to a parameter (such as

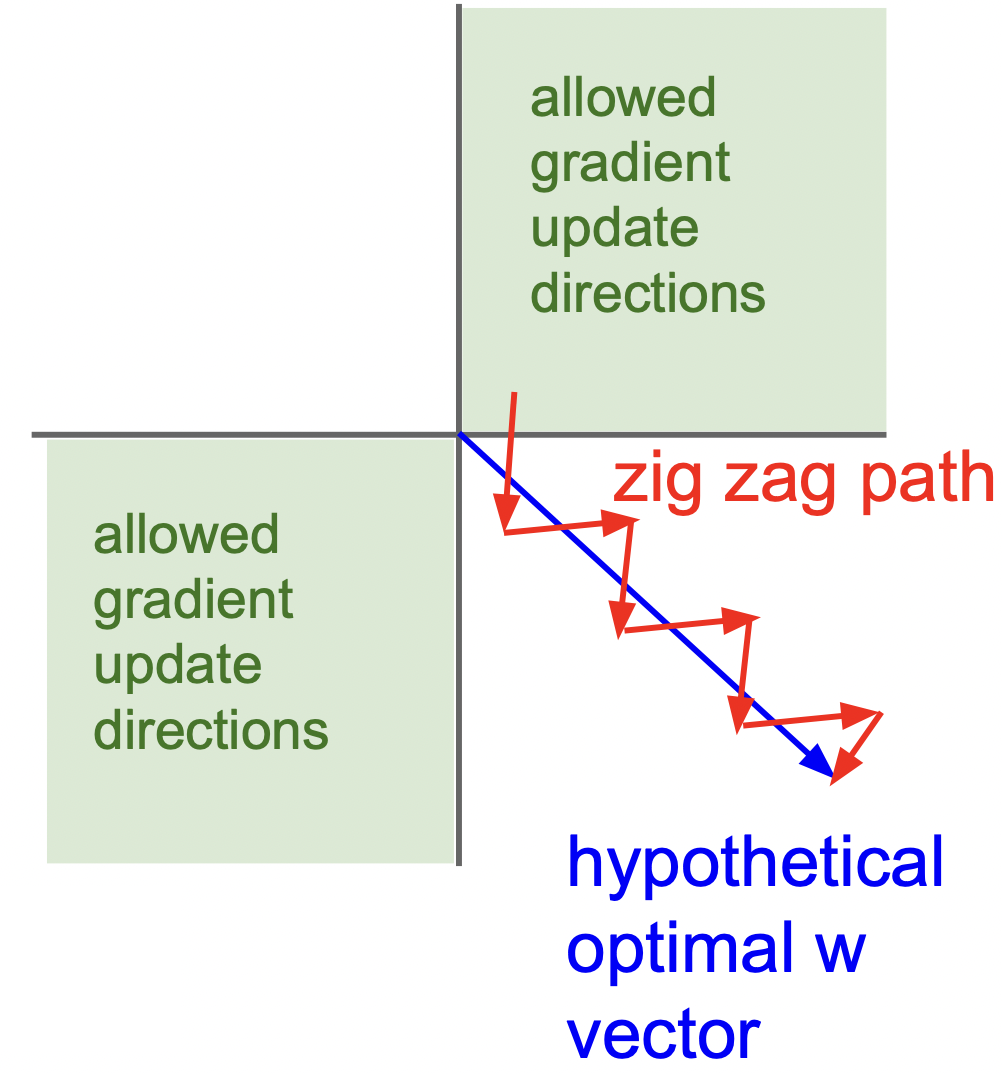

Suppose the activation function of the previous layer is Sigmoid, whose output is always positive. Then, for all parameters connecting to a neuron in the current layer (i.e.,

As a result, the parameters are divided into groups. Parameters in the same group are always updated in the same direction (either all increase or all decrease). This limits the freedom of parameter updates.
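A small numeric sketch of this grouping effect follows. It assumes a single neuron with two incoming weights, whose weight gradients are the upstream gradient times the corresponding inputs. The input values, the upstream gradients, and the helper name `weight_grads` are all made up for illustration.

```python
def weight_grads(upstream, inputs):
    """Gradients of the incoming weights of one neuron: upstream gradient times each input."""
    return [upstream * a for a in inputs]

positive_inputs = [0.9, 0.2]   # e.g. sigmoid outputs, always positive
mixed_inputs = [0.9, -0.2]     # e.g. outputs of a zero-centred activation

for upstream in (1.5, -1.5):
    same_sign = weight_grads(upstream, positive_inputs)
    free_sign = weight_grads(upstream, mixed_inputs)
    print(f"upstream={upstream:+.1f}  positive inputs -> {same_sign}  mixed inputs -> {free_sign}")
```

With positive inputs, both weight gradients flip sign together whenever the upstream gradient does. With mixed-sign inputs they can point in different directions.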

We illustrate a group in a 2D space below. Since the gradients have the same sign, the parameters will be updated either to the top-right or bottom-left. If the optimal solution happens to be the bottom-right direction (which is more likely in high-dimensional space), the zig-zag phenomenon occurs.

The optimal solution can be reached much faster if the parameters can be updated in the bottom-right direction. Therefore, the zig-zag phenomenon can lead to inefficient parameter updates during training.

In the above zig-zag phenomenon, we see that the problem arises because the activation function’s output is always positive. If the activation function can produce both positive and negative outputs, the gradients of parameters connecting to a neuron in the current layer can have different signs. This allows for more flexible parameter updates.

The ideal case is that the activation function outputs positive and negative values with equal likelihood. This requires the activation function to be zero-centred. A zero-centred activation function makes it more likely for the gradients of parameters in the same group to have different signs, thus reducing the zig-zag phenomenon and improving training efficiency.

Sigmoid is one of the earliest activation functions used in neural networks. It was widely used in the perceptrons and multi-layer perceptrons (MLPs) of the 1980s. It fell out of favour in the 2010s due to its drawbacks.

Tanh

The tanh function maps any real-valued number into the range (-1, 1). It also has an “S”-shaped curve:

$$\tanh(z) = \frac{e^{z} - e^{-z}}{e^{z} + e^{-z}}$$

Tanh is a scaled and shifted version of the Sigmoid function $\sigma$, making it zero-centred:

$$\tanh(z) = 2\,\sigma(2z) - 1$$
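The scaled-and-shifted relationship is the standard identity $\tanh(z) = 2\,\sigma(2z) - 1$, where $\sigma$ is the sigmoid. The short sketch below checks it numerically against Python’s built-in `math.tanh`. The sample points are arbitrary.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def tanh_from_sigmoid(z):
    """Tanh written as a scaled and shifted sigmoid: 2 * sigmoid(2z) - 1."""
    return 2.0 * sigmoid(2.0 * z) - 1.0

for z in (-3.0, -0.5, 0.0, 0.5, 3.0):
    print(f"z={z:+.1f}  math.tanh={math.tanh(z):+.6f}  2*sigmoid(2z)-1={tanh_from_sigmoid(z):+.6f}")
```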

Tanh inherits most of the advantages and disadvantages of Sigmoid, except that it is zero-centred, which makes the zig-zag phenomenon less severe.

As an improvement on Sigmoid, Tanh was also widely used in the perceptrons and multi-layer perceptrons (MLPs) of the 1980s. It is also used in some recurrent neural networks (RNNs) such as LSTM and GRU.

ReLU and Its Variants

ReLU (Rectified Linear Unit) is an activation function defined as:

$$\mathrm{ReLU}(z) = \max(0, z)$$

The logic of ReLU and its derivative is simple: a neuron has two states—active or inactive.

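- Active ($z > 0$): the output equals the input, and the derivative is 1.

- Inactive ($z < 0$): the output is 0, and the derivative is 0.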

This highlights ReLU’s simplicity: it either lets information flow or blocks it completely.

Although the ReLU function is not differentiable at 0, it is still usable in backpropagation because we can define the gradient at that point. In practice, we often set the gradient to either 0 or 1 at $z = 0$.
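A minimal sketch of ReLU and its gradient, with the value at zero exposed as a convention rather than a fact. The parameter name `grad_at_zero` is my own, and the default of 0 is only one of the two choices mentioned above.

```python
def relu(z):
    """ReLU: pass positive inputs through, clamp the rest to zero."""
    return max(0.0, z)

def relu_grad(z, grad_at_zero=0.0):
    """Derivative of ReLU: 1 for z > 0, 0 for z < 0.

    At z == 0 the derivative does not exist, so we return a chosen
    convention (0 by default; 1 is the other common choice).
    """
    if z > 0:
        return 1.0
    if z < 0:
        return 0.0
    return grad_at_zero

for z in (-2.0, 0.0, 3.0):
    print(f"z={z:+.1f}  relu={relu(z):.1f}  grad={relu_grad(z):.1f}")
```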

The binary step function is also not differentiable at its step point, but that is not its main problem. Its derivative is zero everywhere else, which makes every gradient zero and prevents any learning from occurring.

Pros:

- No vanishing gradient problem in the positive part;

- Simple function, very efficient to compute.

Cons:

- Outputs are not zero-centred. The zig-zag phenomenon can occur during optimization, which can lead to inefficient weight updates during training.

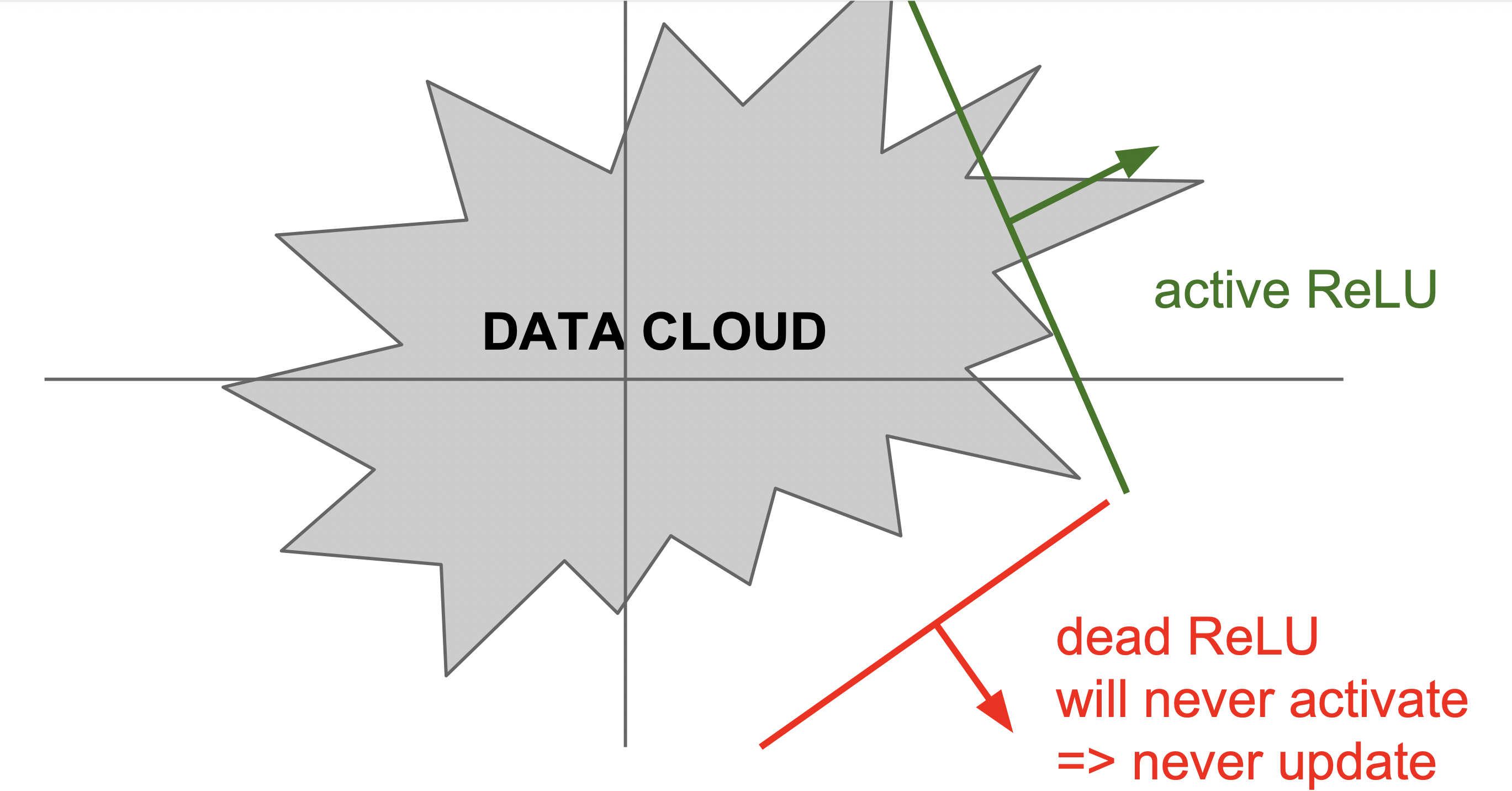

- The “dying ReLU” problem: A neuron is “dead” whenever its input is negative, so the parameters connected to it are not updated.

From the computation graph of a neural network, we can see that the path from the loss to a parameter (such as a weight connected to a ReLU neuron) passes through both the neuron’s output and the derivative of its activation function.

The ReLU function maps all negative inputs to zero. For the weights that read this neuron’s output, the gradient is multiplied by that output, which is zero. For the weights that feed the neuron, the chain rule multiplies the gradient by the derivative of ReLU, which is also zero for negative inputs. Either way the gradient is zero, so these parameters are not updated during training.

Statistically, if a neuron’s input is roughly symmetric around zero, there is about a 50% chance for a ReLU neuron to be “dead” (i.e., its input is negative) for any given input. On average, then, about half of the parameters cannot be updated in each training step, which can reduce the efficiency of training.

There is even a risk that some neurons are never activated during the entire training process, leaving a portion of the network undertrained or not trained at all.

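We assume a ReLU neuron receives two inputs $a_1$ and $a_2$ from the previous layer, with weights $w_1$, $w_2$ and bias $b$. The neuron is active only where $w_1 a_1 + w_2 a_2 + b > 0$, which is one side of a line in the 2D input space. If the weights and bias place the entire data cloud on the other side of that line, the neuron outputs zero for every sample.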

A bad and unstable optimizer (for example, an optimizer with a huge learning rate) may produce such weights and bias, making the neuron completely dead.

Please note, the data cloud in a layer is the representation of the original input data, not the original input data itself. Therefore, it is not fixed and can change during training, so there is still a chance for the neuron to be re-activated in the future. For further analysis, please refer to (Lu et al. 2019).
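The dead-neuron scenario can be sketched with a toy data cloud. Everything here is hypothetical: the cloud is 1000 random points in the unit square, and the two weight and bias settings are picked by hand so that one decision line cuts through the cloud and the other leaves the whole cloud on the negative side.

```python
import random

random.seed(0)
# Hypothetical data cloud: 1000 points with both coordinates in [0, 1).
cloud = [(random.random(), random.random()) for _ in range(1000)]

def active_fraction(w1, w2, b, points):
    """Fraction of points for which the ReLU neuron's input w1*x1 + w2*x2 + b is positive."""
    active = sum(1 for x1, x2 in points if w1 * x1 + w2 * x2 + b > 0)
    return active / len(points)

# The line x1 - x2 = 0 cuts through the cloud: the neuron fires for part of the data.
healthy = active_fraction(1.0, -1.0, 0.0, cloud)

# A large negative bias pushes the whole cloud to the negative side: never active.
dead = active_fraction(1.0, 1.0, -5.0, cloud)

print(f"healthy neuron active on {healthy:.1%} of points, dead neuron on {dead:.1%}")
```

Because the neuron in the second setting is inactive for every point, its incoming weights get a zero gradient from every sample. It can only recover if the data cloud itself moves as earlier layers change.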

ReLU was first proposed to be used in neural networks by (Glorot, Bordes, and Bengio 2011). It was then used in the famous AlexNet convolutional neural network, significantly improving training speed and winning the ImageNet competition.

This led to the rapid popularization of ReLU in the deep learning community. Since then, almost all convolutional neural networks (CNNs) and most deep neural networks (DNNs) have adopted ReLU or its variants.

ReLU has several variants designed to address its drawbacks:

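- Leaky ReLU: Allows a small, non-zero gradient when the unit is inactive (i.e., $z < 0$):

$$\mathrm{LeakyReLU}(z) = \begin{cases} z, & z > 0 \\ \alpha z, & z \le 0 \end{cases}$$

where $\alpha$ is a small positive constant (for example, $0.01$).

- Parametric ReLU (PReLU): Similar to Leaky ReLU, but the slope for negative inputs is learned during training.

- Exponential Linear Unit (ELU): Like ReLU, but it has a smooth exponential curve for negative inputs:

$$\mathrm{ELU}(z) = \begin{cases} z, & z > 0 \\ \alpha\,(e^{z} - 1), & z \le 0 \end{cases}$$

where $\alpha > 0$ controls the value that ELU saturates to for large negative inputs.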

Leaky ReLU, PReLU, and ELU are designed to address the “dying ReLU” problem. They keep a small gradient for negative inputs, allowing the parameters to be updated even when the neuron is inactive.

The pros and cons of these variants are similar to those of ReLU, with the added benefit of mitigating the “dying ReLU” problem. On the downside, ELU uses an exponential function for negative inputs, which adds computational overhead compared to ReLU and Leaky ReLU.
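For reference, here is a minimal sketch of Leaky ReLU and ELU with their derivatives, using the usual piecewise definitions. The default `alpha` values (0.01 for Leaky ReLU, 1.0 for ELU) are common choices that I am assuming, not values given in the text. PReLU would have the same form as `leaky_relu`, with `alpha` treated as a trainable parameter.

```python
import math

def leaky_relu(z, alpha=0.01):
    """Leaky ReLU: identity for z > 0, small slope alpha otherwise."""
    return z if z > 0 else alpha * z

def leaky_relu_grad(z, alpha=0.01):
    """Gradient is 1 on the positive side and alpha (not 0) on the negative side."""
    return 1.0 if z > 0 else alpha

def elu(z, alpha=1.0):
    """ELU: identity for z > 0, alpha * (e^z - 1) otherwise (saturates to -alpha)."""
    return z if z > 0 else alpha * (math.exp(z) - 1.0)

def elu_grad(z, alpha=1.0):
    """Gradient is 1 on the positive side and alpha * e^z on the negative side."""
    return 1.0 if z > 0 else alpha * math.exp(z)

for z in (-3.0, -0.5, 2.0):
    print(f"z={z:+.1f}  leaky={leaky_relu(z):+.4f} (grad {leaky_relu_grad(z):.2f})"
          f"  elu={elu(z):+.4f} (grad {elu_grad(z):.4f})")
```

Unlike plain ReLU, both gradients stay non-zero for negative inputs, which is what lets an inactive neuron keep learning.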

ReLU variants were proposed after ReLU became popular:

- Leaky ReLU was proposed by (Maas et al. 2013).

- PReLU was proposed by (He et al. 2015).

- ELU was proposed by (Clevert, Unterthiner, and Hochreiter 2015).

Most people still use ReLU due to its simplicity and efficiency. However, in some cases, especially when training very deep networks, these variants can provide better performance.

Maxout

Maxout is simply a function that takes the maximum value from multiple inputs:

$$\mathrm{Maxout}(z_1, \dots, z_k) = \max(z_1, \dots, z_k)$$

Maxout aggregates multiple neuron activations from the previous layer by taking their maximum. The number of inputs $k$ is a hyperparameter.

Maxout is a generalization of ReLU. ReLU can be seen as a special case of Maxout with two inputs: the input itself and zero. Therefore, Maxout can be viewed as a more flexible activation function that can learn to select the most relevant features from multiple inputs.
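The sketch below shows one way to write a Maxout unit, assuming the formulation in which each input to the maximum is its own affine function of the unit’s input vector. The function names and the hand-picked weights are illustrative only. With the two pieces `z` and `0` it reproduces ReLU, and with the pieces `z` and `-z` it produces the absolute value, another convex function.

```python
def maxout(x, weights, biases):
    """Maxout unit: the maximum over k affine pieces w_j . x + b_j."""
    pieces = [
        sum(w * xi for w, xi in zip(w_j, x)) + b_j
        for w_j, b_j in zip(weights, biases)
    ]
    return max(pieces)

def relu_via_maxout(z):
    """ReLU as a k = 2 Maxout unit: max(1 * z, 0 * z)."""
    return maxout([z], weights=[[1.0], [0.0]], biases=[0.0, 0.0])

def abs_via_maxout(z):
    """Absolute value as a k = 2 Maxout unit: max(z, -z)."""
    return maxout([z], weights=[[1.0], [-1.0]], biases=[0.0, 0.0])

for z in (-3.0, 0.0, 2.0):
    print(f"z={z:+.1f}  relu_via_maxout={relu_via_maxout(z):.1f}  abs_via_maxout={abs_via_maxout(z):.1f}")
```

In a trained network the weights and biases of each piece would be learned rather than fixed by hand as they are here.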

Pros:

- Maxout can approximate any convex function, making it more flexible than ReLU or Sigmoid.

- Solves the “dying ReLU” problem: because it does not zero out all negative inputs, neurons do not get stuck in an inactive state.

Cons:

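- More parameters: Each Maxout neuron equals $k$ ordinary neurons, one per input to the maximum, so the number of parameters grows by a factor of $k$.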

Maxout was proposed by (Goodfellow et al. 2013). It is rarely used in modern architectures, because ReLU and its variants (Leaky ReLU, GELU) are simpler and often perform just as well.

The activation function forms the backbone of a neural network. The choice of activation function can significantly impact the performance of a neural network.

In general practice, ReLU and its variants (Leaky ReLU, GELU) are the most commonly used activation functions in modern neural networks due to their simplicity and effectiveness. Sigmoid and Tanh are less commonly used in hidden layers but may still be used in output layers for specific tasks (e.g., Sigmoid for binary classification). If there is no specific reason to choose another activation function, ReLU is usually a safe and effective choice.