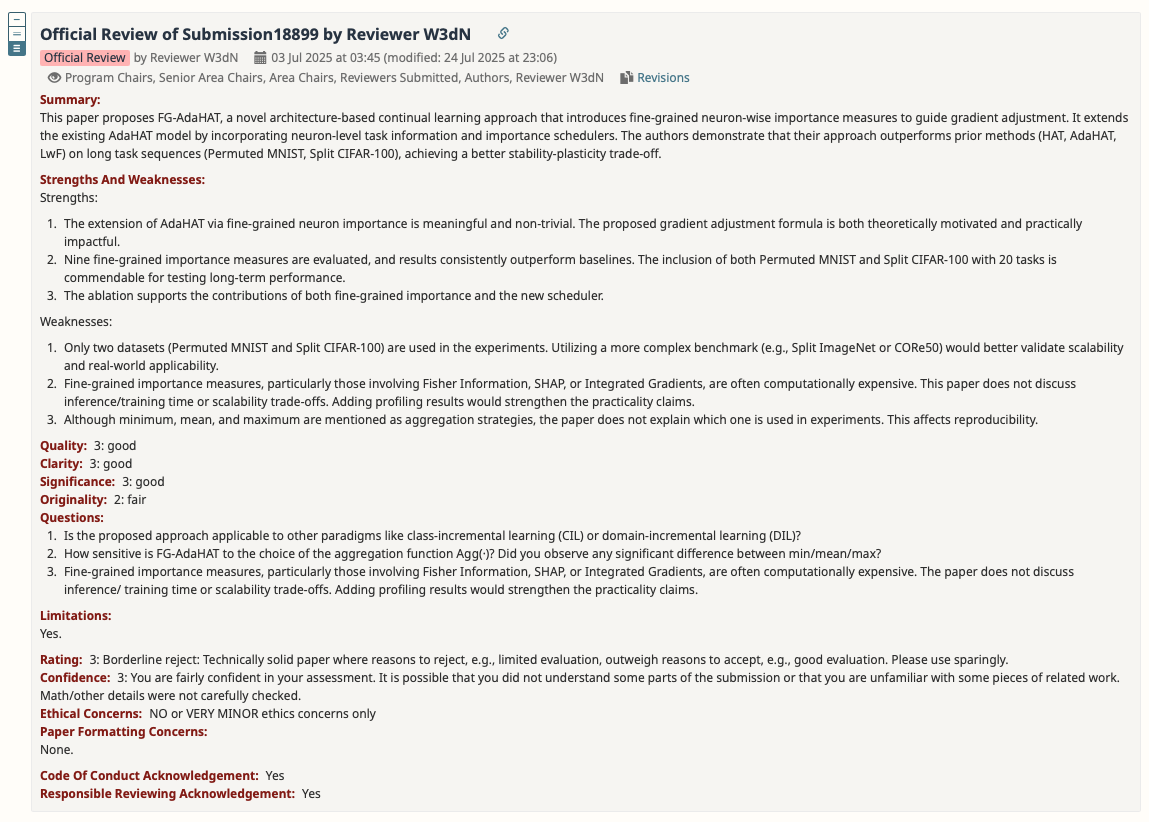

1 Reviewer W3dN

1.1 Rebuttal

Thank you for your valuable comments! Below are our responses to the weaknesses and questions you raised.

Response to weakness 1:

We have already run experiments on a third dataset, Combined-20. Please see supplemental material section B. Combined-20 is a much more complex and realistic benchmark.

There are mainly three ways to construct continual learning datasets: permute the pixels, split by class, and combine different dataset sources. Combined-20 represents the third way by combining 20 distinct datasets commonly used in machine learning, each forming a separate task. The 20 datasets are: CIFAR-10, CIFAR-100, MNIST, SVHN, Fashion-MNIST, TrafficSigns, FaceScrub, NotMNIST, EMNIST Digits, EMNIST Letters, Arabic Handwritten Digits, Kannada-MNIST, Sign Language MNIST, Kuzushiji-MNIST, Food-101, Linnaeus 5, Caltech 101, EuroSAT, DTD, Country 211.
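The three construction schemes can be sketched in a few lines. This is only an illustrative sketch, not the code used in our experiments: the helper names (`make_permutation`, `permute_image`, `split_by_class`, `combine_sources`) and the toy inputs are assumptions made for this example.

```python
import random

def make_permutation(num_pixels, seed):
    """Permuted tasks: one fixed random pixel permutation per task (seeded)."""
    rng = random.Random(seed)
    perm = list(range(num_pixels))
    rng.shuffle(perm)
    return perm

def permute_image(flat_image, perm):
    """Apply a task's permutation to a flattened image."""
    return [flat_image[j] for j in perm]

def split_by_class(labels, classes_per_task):
    """Split tasks: group sample indices by consecutive blocks of classes."""
    classes = sorted(set(labels))
    tasks = []
    for start in range(0, len(classes), classes_per_task):
        task_classes = set(classes[start:start + classes_per_task])
        tasks.append([i for i, y in enumerate(labels) if y in task_classes])
    return tasks

def combine_sources(source_names):
    """Combined tasks: each source dataset becomes one task (IDs start at 1)."""
    return {task_id: name for task_id, name in enumerate(source_names, start=1)}
```

In the combined scheme, a call such as `combine_sources(["CIFAR-10", "MNIST"])` simply assigns one task ID per source dataset, which is how Combined-20 maps its 20 datasets to 20 tasks.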

Response to weakness 2 and question 3:

We reported the training time of our experiments in supplemental material section F, part “Training Details”. The experimental settings and detailed information about the environment in which we ran the code, including GPUs, Python package versions (PyTorch, Captum), seeds, etc., are provided in the supplemental material, so the reported training times are practical and reproducible.

In our measurements, FG-AdaHAT with most of our importance measures trains in a reasonable number of hours, with one exception: Feature Ablation, which significantly exceeded the time budget under the same experimental conditions. However, Feature Ablation is a very basic method for computing neuron importance, and many alternative methods are available.

We will organise these measurements into more detailed profiling results in the camera-ready version.

Response to weakness 3 and question 2:

We included an analysis of the aggregation strategies in supplemental material section G (Hyperparameter Study). It is on page 9 of the supplemental material, just above the references.

Regarding sensitivity to the aggregation strategy, our analysis states that minimum consistently performs best across all settings, while the others perform similarly. We also provide possible reasons for this phenomenon in the analysis.

We stated that we use the minimum aggregation strategy for all experiments in supplemental material section F, part “Hyperparameters”.

Response to question 1:

Unfortunately, FG-AdaHAT is based on the HAT architecture, which is applicable to task-incremental learning (TIL) only. HAT requires the task ID of the input data to choose the corresponding mask, which only TIL can offer [39]. In fact, most similar architecture-based approaches require task information in advance, which makes them inapplicable to other scenarios as well.

References:

[39] Joan Serra, Didac Suris, Marius Miron, and Alexandros Karatzoglou. Overcoming catastrophic forgetting with hard attention to the task. In International conference on machine learning, pages 4548–4557. PMLR, 2018.

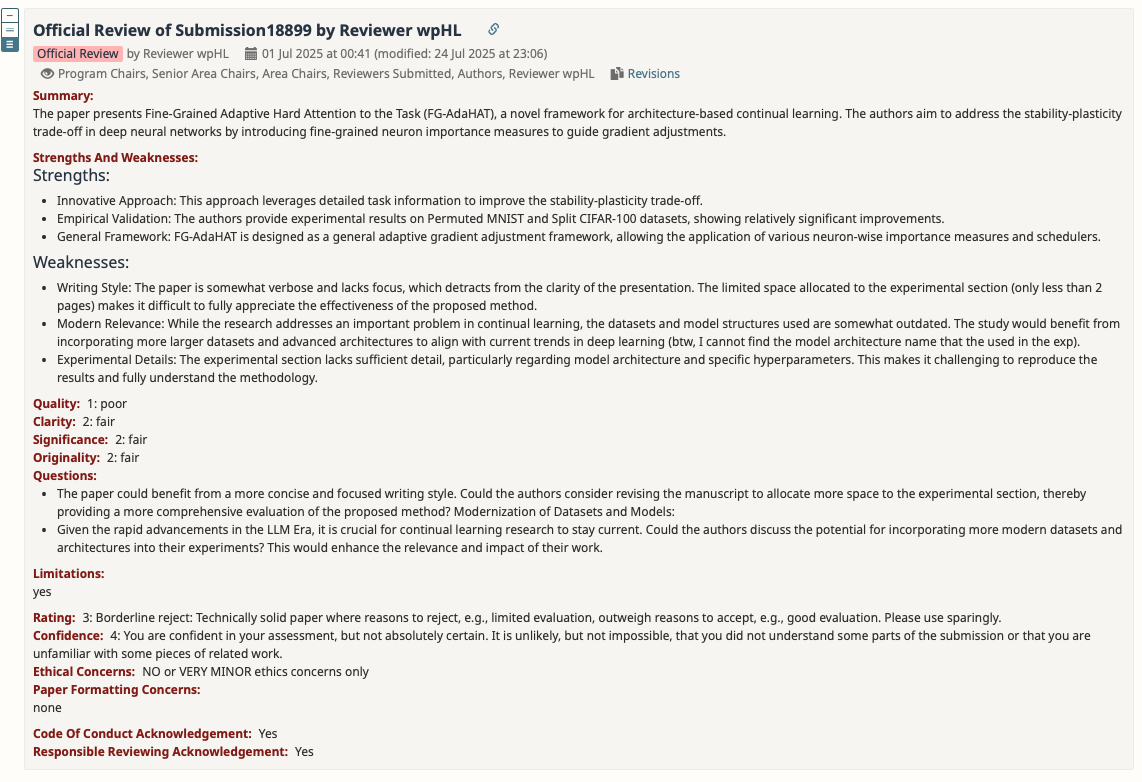

2 Reviewer wpHL

2.1 Rebuttal

Thank you for your valuable comments! Below are our responses to the weaknesses and questions you raised.

Response to weakness 1 and question 1:

Due to space limitations, we moved the full experimental results to the supplemental material. As a result, some vital results, such as those on the third dataset Combined-20, are not included in the main paper.

We did this to present our methodology clearly, as it involves two key parts: the FG-AdaHAT framework (section 3) and fine-grained neuron importance (section 4), both of which need a thorough explanation.

We will revise our writing to make room for the vital experimental results in the camera-ready version.

Response to weakness 2 and question 2:

We have already run experiments on a third dataset, Combined-20. Please see supplemental material section B. Combined-20 is a much more complex and larger benchmark.

There are mainly three ways to construct continual learning datasets: permute the pixels, split by class, and combine different dataset sources. Combined-20 represents the third way by combining 20 distinct datasets commonly used in machine learning, each forming a separate task. The 20 datasets are: CIFAR-10, CIFAR-100, MNIST, SVHN, Fashion-MNIST, TrafficSigns, FaceScrub, NotMNIST, EMNIST Digits, EMNIST Letters, Arabic Handwritten Digits, Kannada-MNIST, Sign Language MNIST, Kuzushiji-MNIST, Food-101, Linnaeus 5, Caltech 101, EuroSAT, DTD, Country 211.

The details of the model architecture are also included in the supplemental material; please refer to section F, part “Network Architecture”. Essentially, we use a simple multi-layer perceptron (MLP) for simple datasets like Permuted MNIST, and ResNet-18 for complex datasets like Split CIFAR-100 and Combined-20. These architectures are sufficient for these datasets, and the three datasets we used cover the three categories of continual learning datasets described above. Due to limited resources, we cannot afford larger settings.

Response to weakness 3:

Due to the space limit, we moved the full experimental results and the detailed experimental settings to supplemental material sections C and F, respectively. In section F, we provide our detailed choices of network architecture, hyperparameters, optimizers, training epochs and batch sizes, GPUs, seeds, training times, and Python packages.

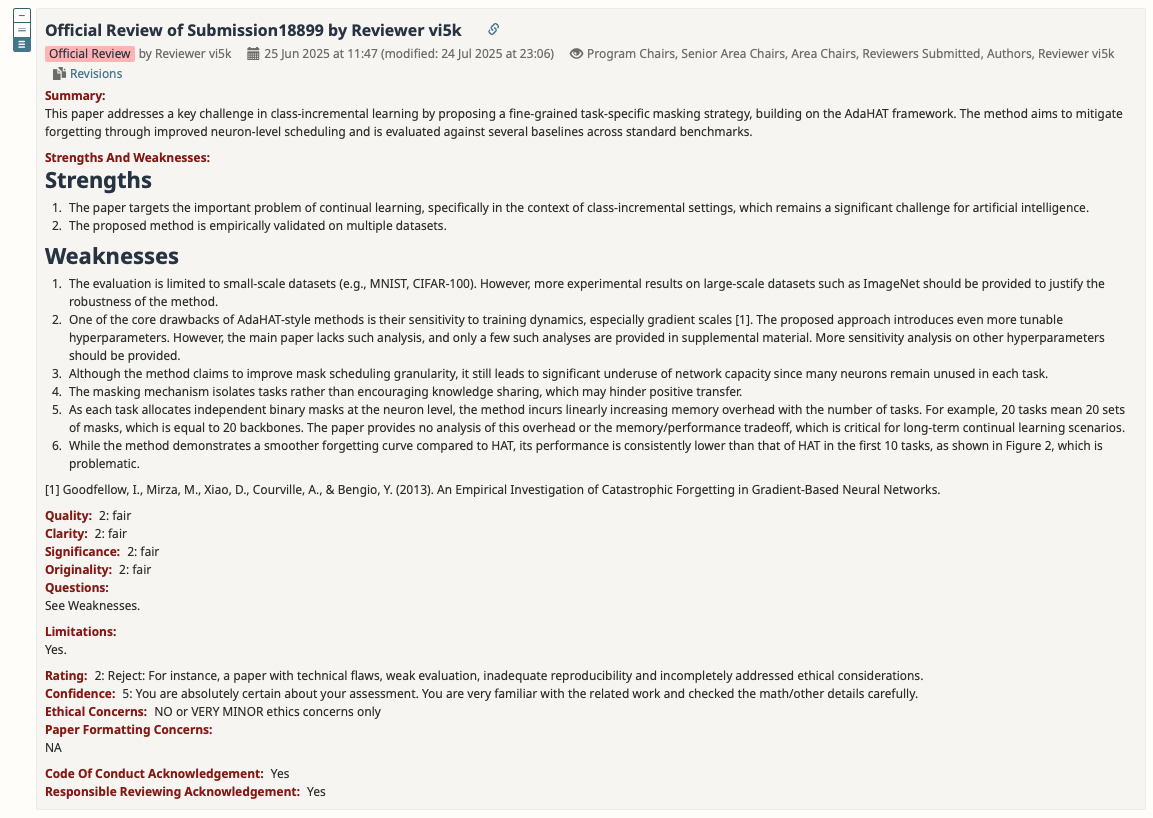

3 Reviewer vi5k

3.1 Rebuttal

Thank you for your valuable comments! Below are our responses to the weaknesses and questions you raised.

Response to weakness 1:

We have already run experiments on a third dataset, Combined-20. Please see supplemental material section B. Combined-20 is a much more complex and larger benchmark.

There are mainly three ways to construct continual learning datasets: permute the pixels, split by class, and combine different dataset sources. Combined-20 represents the third way by combining 20 distinct datasets commonly used in machine learning, each forming a separate task. The 20 datasets are: CIFAR-10, CIFAR-100, MNIST, SVHN, Fashion-MNIST, TrafficSigns, FaceScrub, NotMNIST, EMNIST Digits, EMNIST Letters, Arabic Handwritten Digits, Kannada-MNIST, Sign Language MNIST, Kuzushiji-MNIST, Food-101, Linnaeus 5, Caltech 101, EuroSAT, DTD, Country 211.

Response to weakness 2:

Before the neuron importance measures are applied to guide our gradient adjustment, they are scaled to \([0,1]\) by min-max scaling so that they are insensitive to the scale of the raw values. Please refer to section 4. For example, the raw neuron importance measure Output Gradients (OG) is scaled to \([0,1]\) and then used as the importance score.
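As a minimal sketch of this scaling step (not our actual implementation), the function below min-max scales a list of raw importance values. Applying it to one layer's neurons at a time, the name `min_max_scale`, the `eps` guard, and the choice to return zeros for a constant input are all assumptions made for this illustration.

```python
def min_max_scale(raw_importance, eps=1e-12):
    """Scale raw neuron importance values to [0, 1] by min-max scaling.

    If all values are (nearly) equal, return zeros; this fallback is an
    assumption of this sketch, not something specified in the paper.
    """
    lo = min(raw_importance)
    hi = max(raw_importance)
    span = hi - lo
    if span < eps:
        return [0.0 for _ in raw_importance]
    return [(v - lo) / span for v in raw_importance]
```

Because only the relative position within the range matters, multiplying all raw values by a constant leaves the scaled scores unchanged, which is why the scale of the raw measure does not act as a hidden hyperparameter.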

Beyond AdaHAT’s adjustment intensity \(\alpha\), our work introduces only two hyperparameters: the base value \(b_L\) and the choice of \(Agg()\). Both are discussed in supplemental material section G (Hyperparameter Study), which shows that they do not play a key role in our method and have little effect on performance. Another potential hyperparameter of concern is the choice of fine-grained importance measure. We discuss this in both section 5.2 (Result Analysis) and supplemental material section C (Full Experimental Results): “overall performance is largely similar and consistent across all 9 different measures, with only minor differences”.

Response to weakness 3:

As we implied in section 2, architecture-based continual learning approaches fall roughly into two categories: (1) pre-allocating a fixed network for future tasks; (2) dynamic incremental networks. The issue you raised in weakness 3 is a general problem of all architecture-based approaches in the former category. We argue that a pre-allocated fixed network is still preferable to dynamic incremental networks in this respect, as the latter often lead to linearly increasing memory cost.

Response to weakness 4:

Our method is based on the HAT architecture. In HAT, many neurons are masked by multiple tasks (please refer to Figure 2 in the original AdaHAT paper [49], which illustrates this very clearly), so network parameters are shared across tasks. Therefore, our method is not purely parameter isolation. The shared parameters encourage knowledge sharing and positive transfer.

Moreover, this sharing is highly adaptive because the mask itself is learned (it is gated from a learnable parameter called the task embedding; please refer to section 2.2 of the HAT paper [39]). This means FG-AdaHAT learns both how much to share (the overlap ratio of masks) and in which parts of the network to encourage knowledge sharing.
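To make the gating and overlap concrete, here is a small sketch. It follows the sigmoid gating of a task embedding described in the HAT paper [39]; the 0.5 binarization threshold, the intersection-over-union definition of the overlap ratio, and all function names are assumptions chosen for this illustration rather than definitions taken from our paper.

```python
import math

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def gate(task_embedding, s):
    """HAT-style mask for one layer: sigmoid of the scaled task embedding."""
    return [sigmoid(s * e) for e in task_embedding]

def binarize(mask, threshold=0.5):
    """Binary neuron mask (assumed threshold of 0.5)."""
    return [1 if a > threshold else 0 for a in mask]

def overlap_ratio(mask_a, mask_b):
    """Share of neurons used by both tasks among neurons used by either
    (an illustrative definition of overlap)."""
    both = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    either = sum(1 for a, b in zip(mask_a, mask_b) if a or b)
    return both / either if either else 0.0

# Two hypothetical task embeddings for a 4-neuron layer.
mask_1 = binarize(gate([1.0, -1.0, 2.0, 0.5], s=50.0))
mask_2 = binarize(gate([-1.0, -2.0, 1.0, 3.0], s=50.0))
shared = overlap_ratio(mask_1, mask_2)
```

Since the embeddings are learned, both which neurons each task claims and how many neurons two tasks share are outcomes of training rather than fixed design choices.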

Response to weakness 5:

- Our mask is a neuron mask rather than a parameter mask, which means each neuron, rather than each parameter, corresponds to a mask value;

- Our mask for each task is stored as binary values (0 or 1) after training on that task, which takes significantly less memory than float values.

These two facts suggest that our memory overhead is much smaller than it may appear. In the example you raised, 20 tasks do correspond to 20 sets of masks, but these take up far less space than 20 backbones would (backbone storage is at the parameter level rather than the neuron level). Compared to many architecture-based approaches such as Progressive Networks [36], DEN [53], and even the recent Winning Subnetworks (WSN) [18], which uses parameter masks, FG-AdaHAT achieves decent performance without incurring a comparably large memory overhead.

The gap between neuron-level and parameter-level memory overhead is large. Nevertheless, we will add a quantitative analysis of memory cost in the camera-ready version to make this formal.
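As a rough back-of-the-envelope illustration of the neuron-level versus parameter-level difference, the sketch below counts storage for a small fully connected network. The layer sizes `[784, 256, 256, 10]`, the assumption that only hidden-layer neurons are masked, the 1-bit-per-entry packing, and the 4-byte float parameters are all hypothetical choices for this example; they are not the settings or measurements from our experiments.

```python
def count_parameters(layer_sizes):
    """Weights plus biases of a fully connected network."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

def count_masked_neurons(layer_sizes):
    """Assume one mask value per hidden neuron (input/output left unmasked)."""
    return sum(layer_sizes[1:-1])

def bits_to_bytes(bits):
    """Bytes needed to store the given number of bits, rounded up."""
    return (bits + 7) // 8

layer_sizes = [784, 256, 256, 10]   # hypothetical MLP
num_tasks = 20

n_params = count_parameters(layer_sizes)
n_neurons = count_masked_neurons(layer_sizes)

neuron_mask_bytes = bits_to_bytes(num_tasks * n_neurons)   # binary neuron masks
param_mask_bytes = bits_to_bytes(num_tasks * n_params)     # binary parameter masks
backbone_copies_bytes = num_tasks * n_params * 4           # 20 float32 backbones

print(neuron_mask_bytes, param_mask_bytes, backbone_copies_bytes)
```

Under these assumptions, the 20 binary neuron masks need on the order of a kilobyte, while 20 binary parameter masks need hundreds of kilobytes and 20 backbone copies need tens of megabytes.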

Response to weakness 6:

We explained in section 3, part “Task-specific Importance Scheduling”, that the gradient adjustment mechanism inevitably updates parameters allocated to previous tasks and leads to a performance drop on the first few tasks; we can only alleviate this early problem rather than fully solve it. The importance scheduler is designed to alleviate it (as stated in that part) and has proven effective. A whole paragraph is devoted to this effectiveness; please refer to the last paragraph of section 5.2 (Result Analysis). Essentially, FG-AdaHAT starts to outperform HAT earlier in the task sequence than AdaHAT does, and on Permuted MNIST we even have results in which FG-AdaHAT outperforms HAT throughout. We explain the reason in that paragraph.

On the other hand, the original AdaHAT [49] and our FG-AdaHAT focus on long task sequences, which is what continual learning is mainly about. If the only goal were high performance on the first few tasks, one could give up continual learning and simply use multi-task learning. We argue that it is worth sacrificing a little early performance in order to improve performance in the long run.

Other comments:

To clarify in case of any confusion, our paper focuses on task-incremental learning (TIL) rather than class-incremental learning (CIL). Thank you! :)

References:

[18] Haeyong Kang, Rusty John Lloyd Mina, Sultan Rizky Hikmawan Madjid, Jaehong Yoon, Mark Hasegawa-Johnson, Sung Ju Hwang, and Chang D Yoo. Forget-free continual learning with winning subnetworks. In International Conference on Machine Learning, pages 10734–10750. PMLR, 2022.

[36] Andrei A Rusu, Neil C Rabinowitz, Guillaume Desjardins, Hubert Soyer, James Kirkpatrick, Koray Kavukcuoglu, Razvan Pascanu, and Raia Hadsell. Progressive neural networks. arXiv preprint arXiv:1606.04671, 2016.

[39] Joan Serra, Didac Suris, Marius Miron, and Alexandros Karatzoglou. Overcoming catastrophic forgetting with hard attention to the task. In International conference on machine learning, pages 4548–4557. PMLR, 2018.

[49] Pengxiang Wang, Hongbo Bo, Jun Hong, Weiru Liu, and Kedian Mu. Adahat: Adaptive hard attention to the task in task-incremental learning. In Joint European Conference on Machine Learning and Knowledge Discovery in Databases, pages 143–160. Springer, 2024.

[53] Jaehong Yoon, Eunho Yang, Jeongtae Lee, and Sung Ju Hwang. Lifelong learning with dynamically expandable networks. arXiv preprint arXiv:1708.01547, 2017.

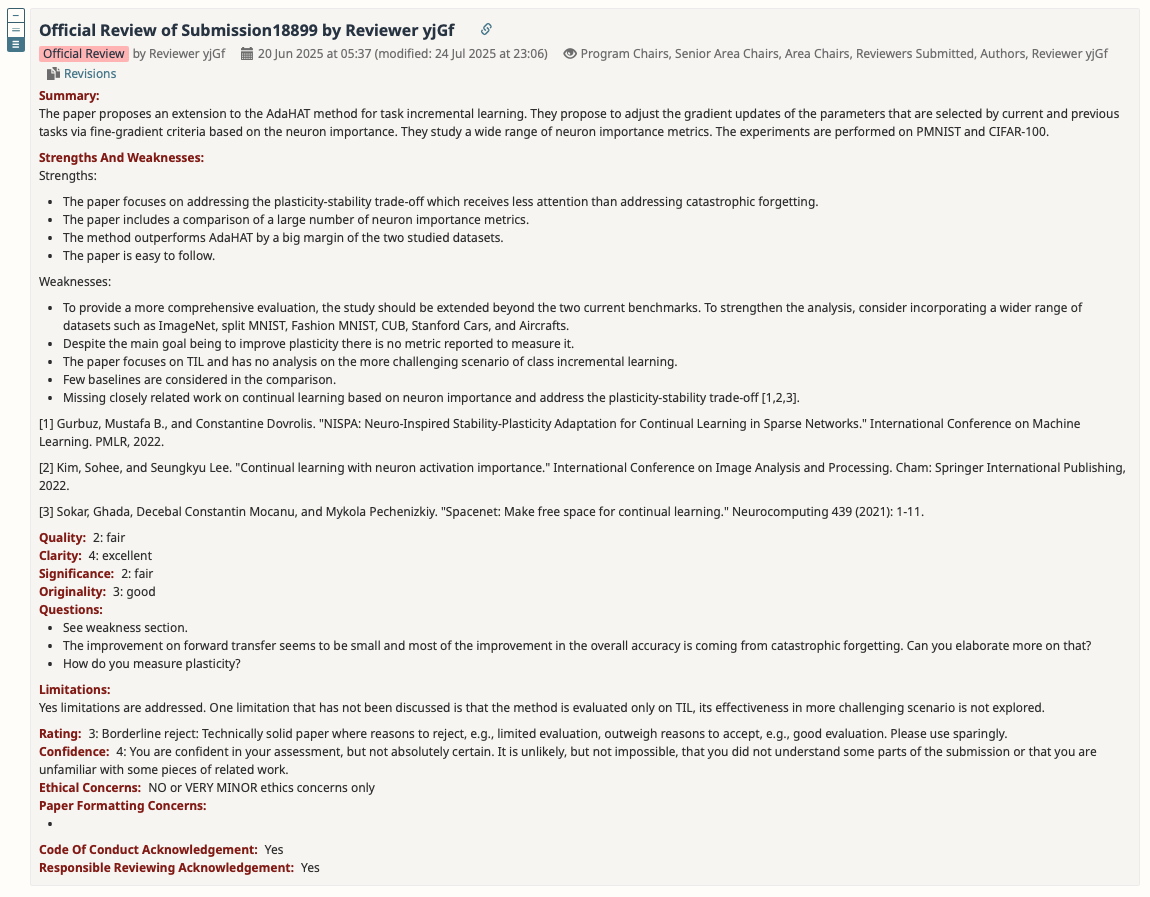

4 Reviewer yjGf

4.1 Rebuttal

Thank you for your valuable comments! Below are our responses to the weaknesses and questions you raised.

Response to weakness 1:

We have already run experiments on a third dataset, Combined-20. Please see supplemental material section B. Combined-20 is a much more complex and realistic benchmark.

There are mainly three ways to construct continual learning datasets: permute the pixels, split by class, and combine different dataset sources. Combined-20 represents the third way by combining 20 distinct datasets commonly used in machine learning, each forming a separate task. The 20 datasets are: CIFAR-10, CIFAR-100, MNIST, SVHN, Fashion-MNIST, TrafficSigns, FaceScrub, NotMNIST, EMNIST Digits, EMNIST Letters, Arabic Handwritten Digits, Kannada-MNIST, Sign Language MNIST, Kuzushiji-MNIST, Food-101, Linnaeus 5, Caltech 101, EuroSAT, DTD, Country 211.

Response to weakness 2 and question 3:

We stated in both section 5.1 (Experimental Setup) and supplemental material section E (Definition of Evaluation Metrics) that the forward transfer (FWT) metric measures plasticity.

Our main goal is to achieve better average performance over all tasks, which we achieve by better balancing stability and plasticity. Therefore, our goal is not to improve plasticity alone but to improve the balance of both. (Note that improving plasticity alone often leads to poor stability because of the stability-plasticity dilemma.)

Response to weakness 3:

Unfortunately, FG-AdaHAT is based on the HAT architecture, which is applicable to task-incremental learning (TIL) only. HAT requires the task ID of the input data to choose the corresponding mask, which only TIL can offer [39]. In fact, most similar architecture-based approaches require task information in advance, which makes them inapplicable to other scenarios as well.

Response to weakness 4:

We will add more baselines for comparison in the camera-ready version.

Response to weakness 5:

We will add them. Note that the first citation you mentioned, “NISPA”, is already included in the related work section as an example of how architecture-based approaches address the stability-plasticity trade-off. See reference [16].

Response to question 2:

We stated in section 5.2 (Result Analysis) that “many FG-AdaHAT variants improve BWT over AdaHAT while sacrificing only a small amount of FWT”. As you observed, forward transfer (FWT) mostly decreases rather than improves in our method. This is because stability (measured by BWT) and plasticity (measured by FWT) form a trade-off, as we mention many times in the paper. Methods often get stuck in the stability-plasticity dilemma, where improving one tends to reduce the other. The best case is improving both, but this cannot be achieved in most cases. Our results, which substantially improve stability while sacrificing a little plasticity, are still good. (We do have some cases that improve both, as stated in section 5.2.) What matters most in continual learning is a balance of stability and plasticity rather than strictly improving both.

This trade-off has been discussed extensively in the continual learning research community. Please see the survey paper [48] that we cited if you are interested.
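For readers less familiar with these two metrics, the sketch below shows one common way to compute them from an accuracy matrix. The exact definitions used in our paper are those in supplemental material section E; the formulas here (BWT as the average change in accuracy on earlier tasks after all training, FWT as the average gap between just-learned accuracy and an independently trained reference) are assumed variants for illustration, and the accuracy numbers are made up.

```python
def backward_transfer(acc):
    """acc[i][j]: accuracy on task j after training on task i (0-indexed).
    Average of (final accuracy - accuracy right after learning) over all
    tasks except the last. Negative values indicate forgetting."""
    last = len(acc) - 1
    diffs = [acc[last][j] - acc[j][j] for j in range(last)]
    return sum(diffs) / len(diffs)

def forward_transfer(acc, reference):
    """Assumed variant: average of (accuracy right after learning task j -
    accuracy of a reference model trained on task j alone), over tasks 2..T.
    Negative values indicate reduced plasticity."""
    diffs = [acc[j][j] - reference[j] for j in range(1, len(acc))]
    return sum(diffs) / len(diffs)

# Made-up numbers for a 3-task sequence.
acc = [
    [0.90, 0.00, 0.00],
    [0.85, 0.80, 0.00],
    [0.80, 0.75, 0.70],
]
reference = [0.90, 0.85, 0.80]

bwt = backward_transfer(acc)            # about -0.075
fwt = forward_transfer(acc, reference)  # about -0.075
```

A method that protects old parameters more strongly would raise `bwt` toward zero while typically lowering `fwt`, which is the trade-off described above.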

References:

[16] Mustafa Burak Gurbuz and Constantine Dovrolis. Nispa: Neuro-inspired stability-plasticity adaptation for continual learning in sparse networks. arXiv preprint arXiv:2206.09117, 2022.

[39] Joan Serra, Didac Suris, Marius Miron, and Alexandros Karatzoglou. Overcoming catastrophic forgetting with hard attention to the task. In International conference on machine learning, pages 4548–4557. PMLR, 2018.

[48] A comprehensive survey of continual learning: Theory, method and application. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2024.

5 Advice That May Be Useful

- Verbose and lacks focus. Make it concise. Allocate more space to the experiment section.

- Add profiling results of different importance measures.

- More advanced datasets and architectures. Follow the trends in deep learning.

- More baselines.

- Extend to CIL.

- Sensitivity analysis?

- Add memory cost analysis.

- Move essential parts to the main paper as much as possible. Supplemental material might not be published.