My takeaway series follows a Q&A format to explain AI concepts at three levels:

Anyone with general knowledge can understand them.

For anyone who wants to dive into the code implementation details of the concept.

For anyone who wants to understand the mathematics behind the technique.

A diffusion model is a type of generative model that learns to generate data by simulating a diffusion process. It is still a neural network, but generation is framed as gradually denoising data, where each denoising step is learned by a neural network.

Input:

- Fixed-length random noise \(\mathbf{x}_T\) (for example, an array shaped like an image), typically drawn from a Gaussian distribution.

Output:

- A generated data sample that resembles the training data, such as an image \(\mathbf{x}_0\) with the same shape as the input.

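Here the forward pass refers to running the trained diffusion model to generate a sample. It should not be confused with the forward (noising) process that is used to build the training data.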

A noise sample \(\mathbf{x}_T\) is gradually denoised into the output data \(\mathbf{x}_0\) over a series of time steps \(t = T, T-1, \ldots, 1\), where \(T\) is the total number of time steps. Each denoising step that transforms \(\mathbf{x}_t\) into \(\mathbf{x}_{t-1}\) is performed by a neural network, which takes the current noisy data \(\mathbf{x}_t\) as input and outputs a less noisy version \(\mathbf{x}_{t-1}\). The number of time steps \(T\) is a hyperparameter.

This process is typically modeled as a Markov chain. A Markov chain is a mathematical system that transitions from one state to another within a state space, where the next state depends only on the current state. Here the state space is the data space, and each transition is one application of the denoising neural network.

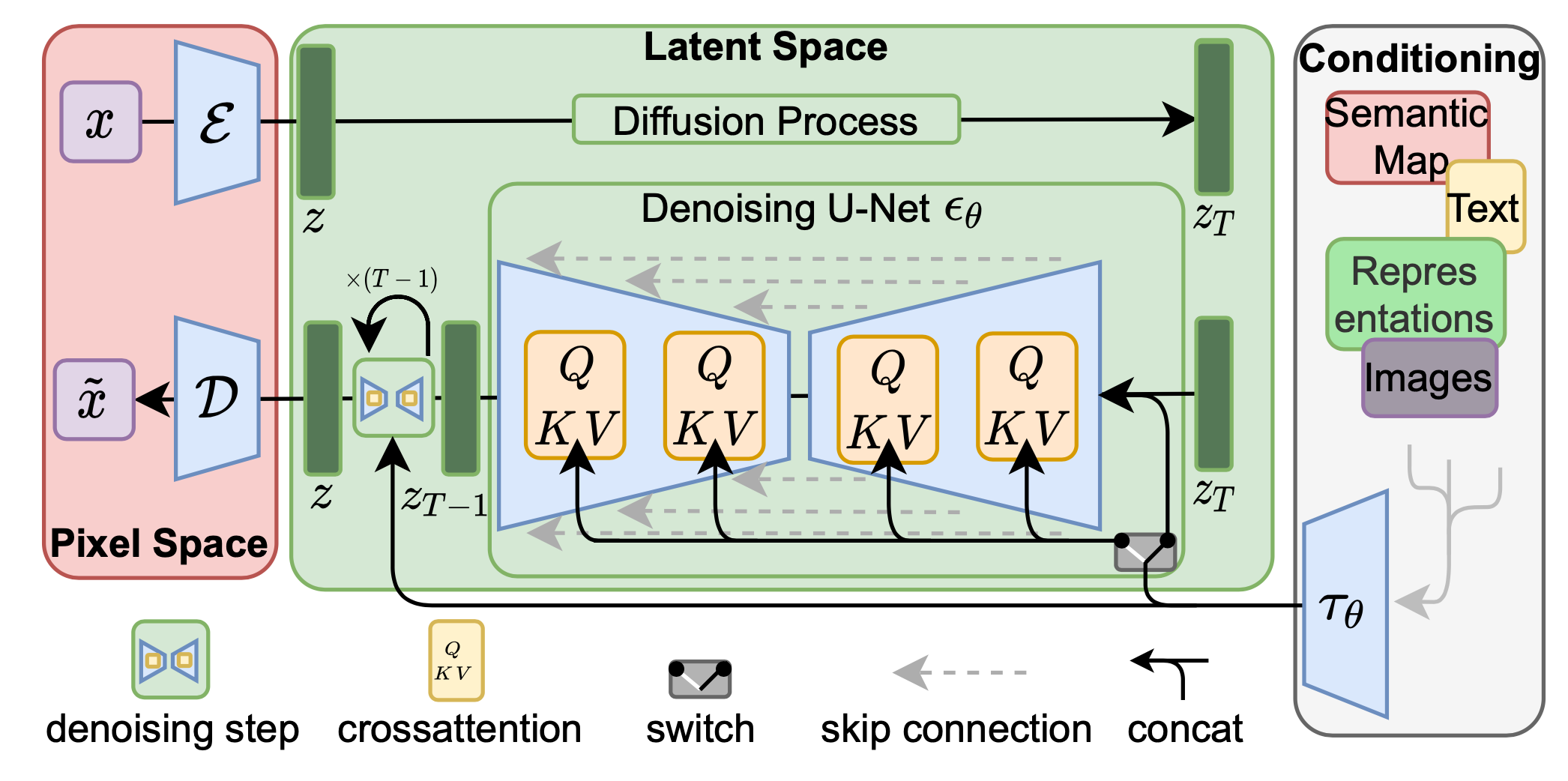
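The chain above can be sketched as a simple loop. This is only a minimal illustration: `denoise_step` is a hypothetical placeholder for the trained network (it just shrinks the sample so the loop runs), and the shape and `T=50` are arbitrary choices, not values from any particular model.

```python
import numpy as np

def denoise_step(x_t, t):
    """Hypothetical stand-in for the trained network: maps x_t to x_{t-1}.

    A real model would be a neural network; here we only shrink the
    sample slightly so the loop runs end to end.
    """
    return 0.9 * x_t

def sample(shape, T, rng):
    # Start from pure Gaussian noise x_T.
    x = rng.standard_normal(shape)
    # Walk the chain t = T, T-1, ..., 1. Each step sees only the current
    # state x_t (and the step index t), which is the Markov property.
    for t in range(T, 0, -1):
        x = denoise_step(x, t)
    return x  # x_0, same shape as x_T

rng = np.random.default_rng(0)
x0 = sample(shape=(3, 8, 8), T=50, rng=rng)
print(x0.shape)
```

Note that nothing from earlier steps is stored: the loop variable `x` is the entire state of the chain.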

The core architecture of a diffusion model is the denoising neural network. It can be any neural network whose output has the same shape as its input. The most commonly used architecture is the U-Net, a type of convolutional neural network (CNN) with an encoder-decoder structure and skip connections.

The denoising neural network at each step shares the same architecture and weights.
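To make the weight sharing concrete, here is a toy sketch of a shape-preserving denoiser. It is a small fully connected network rather than a U-Net, and `TinyDenoiser` is a hypothetical name. Feeding the step index `t` to the network as an extra input is my assumption here; it is a common way to let one set of weights handle different noise levels.

```python
import numpy as np

class TinyDenoiser:
    """Toy fully connected denoiser; a stand-in for a U-Net."""

    def __init__(self, dim, hidden, rng):
        # One set of weights, created once and reused at every time step.
        self.W1 = rng.standard_normal((dim + 1, hidden)) * 0.1
        self.W2 = rng.standard_normal((hidden, dim)) * 0.1

    def __call__(self, x_t, t, T):
        flat = x_t.reshape(-1)
        # Assumption: append the normalized step index so the shared
        # weights can behave differently at different noise levels.
        inp = np.concatenate([flat, [t / T]])
        h = np.tanh(inp @ self.W1)
        out = h @ self.W2
        return out.reshape(x_t.shape)  # same shape as the input

rng = np.random.default_rng(0)
model = TinyDenoiser(dim=4 * 4, hidden=32, rng=rng)
x = rng.standard_normal((4, 4))
y_late = model(x, t=50, T=50)   # same weights ...
y_early = model(x, t=1, T=50)   # ... different step index
print(y_late.shape == x.shape)
```

The same `model` object is called for every step, so the number of parameters does not grow with \(T\).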

Diffusion models are trained with supervised learning on each step of the denoising process.

To construct the training data, we start with a clean data sample \(\mathbf{x}_0\) from the training dataset and gradually add noise to it over a series of time steps \(t = 1, 2, \ldots, T\), producing \(\mathbf{x}_1, \cdots, \mathbf{x}_T\). The amount of noise added at each time step is controlled by a noise schedule. The noisy sample \(\mathbf{x}_t\) at each time step \(t\) is used as the input to the denoising neural network, and the target output is the less noisy sample \(\mathbf{x}_{t-1}\).
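The construction of training pairs can be sketched as follows. The text above does not fix a particular schedule, so this sketch assumes a DDPM-style variance-preserving step, \(\mathbf{x}_t = \sqrt{1-\beta_t}\,\mathbf{x}_{t-1} + \sqrt{\beta_t}\,\boldsymbol{\epsilon}\), with a linear schedule for \(\beta_t\). The function name `make_training_pairs` and the all-ones "clean" sample are hypothetical.

```python
import numpy as np

def make_training_pairs(x0, betas, rng):
    """Return a list of (t, x_t, x_{t-1}) built from one clean sample."""
    pairs = []
    x_prev = x0
    for t, beta in enumerate(betas, start=1):
        eps = rng.standard_normal(x0.shape)
        # Variance-preserving step: scale the signal down, add fresh noise.
        x_t = np.sqrt(1.0 - beta) * x_prev + np.sqrt(beta) * eps
        pairs.append((t, x_t, x_prev))  # input x_t, target x_{t-1}
        x_prev = x_t
    return pairs

rng = np.random.default_rng(0)
T = 1000
betas = np.linspace(1e-4, 0.02, T)  # assumed linear noise schedule
x0 = np.ones((8, 8))                # hypothetical "clean" sample
pairs = make_training_pairs(x0, betas, rng)
print(len(pairs))
```

With this schedule the last sample \(\mathbf{x}_T\) is close to pure Gaussian noise, which matches the input used at generation time.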

The model is indeed trained to predict the random noise added at each step. However, it does not generate at random: each prediction is conditioned on the current \(\mathbf{x}_t\).
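A noise-prediction objective of this kind might look like the sketch below. It assumes a DDPM-style Gaussian schedule, under which \(\mathbf{x}_t\) can be sampled directly from \(\mathbf{x}_0\) using the cumulative product \(\bar{\alpha}_t = \prod_{s \le t} (1 - \beta_s)\). The names `noise_prediction_loss` and `zero_model` are hypothetical, and `zero_model` is an untrained placeholder rather than a real network.

```python
import numpy as np

def noise_prediction_loss(model, x0, t, alpha_bar, rng):
    """MSE between the true noise and the model's guess from x_t."""
    eps = rng.standard_normal(x0.shape)
    a = alpha_bar[t - 1]  # cumulative signal fraction after t steps
    # Jump straight to step t (equivalent in distribution to t single
    # noising steps under the assumed Gaussian schedule).
    x_t = np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps
    # The model only sees x_t and t, never eps itself.
    eps_hat = model(x_t, t)
    return np.mean((eps_hat - eps) ** 2)

betas = np.linspace(1e-4, 0.02, 1000)  # assumed linear noise schedule
alpha_bar = np.cumprod(1.0 - betas)

def zero_model(x_t, t):
    # Hypothetical untrained model that always predicts "no noise".
    return np.zeros_like(x_t)

rng = np.random.default_rng(0)
x0 = np.ones((8, 8))
loss = noise_prediction_loss(zero_model, x0, t=500, alpha_bar=alpha_bar, rng=rng)
print(float(loss))
```

The randomness lives in `eps`, which is the training target. The model's output is a deterministic function of `x_t` and `t`, which is the point made above.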