My takeaway series follows a Q&A format to explain AI concepts at three levels:

Anyone with general knowledge can understand them.

For anyone who wants to dive into the code implementation details of the concept.

For anyone who wants to understand the mathematics behind the technique.

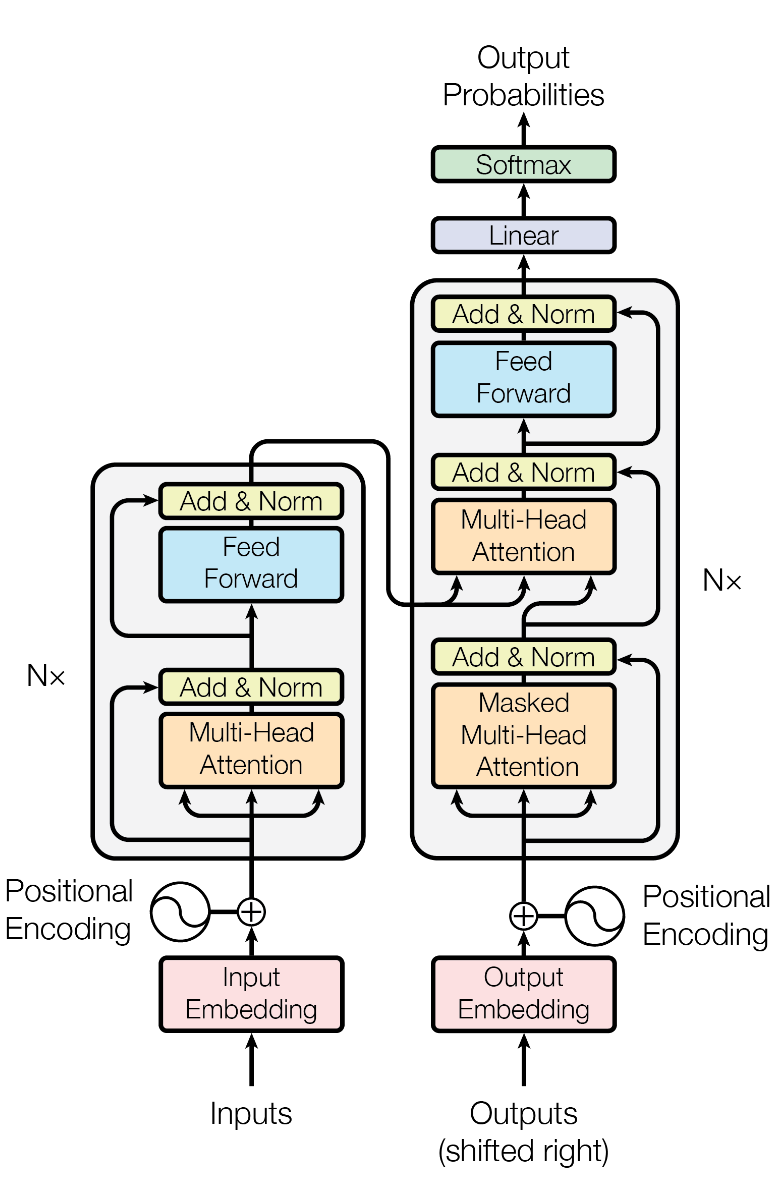

Transformer is a type of neural network architecture for handling sequential data like text. It is an encoder-decoder architecture.

Transformer was introduced by a team of eight researchers at Google in 2017 (Vaswani et al. 2017).

The motivation was to overcome the limitations of RNNs and LSTMs. Both struggle with long sequences, even though LSTM was designed specifically to capture long-range dependencies, and both process tokens one at a time, which limits parallel training. The paper built its architecture entirely on the self-attention mechanism, dropping recurrence and convolution altogether, which made Transformer the first sequence transduction model to rely solely on attention. The title, “Attention is All You Need”, reflects that attention is the core of the architecture. This paper, with its memorable title, has become one of the most cited papers in AI and machine learning.

Transformer made neural networks for sequential data far more powerful and took natural language processing (NLP) to a new level. The architecture was later developed into a family of models, such as BERT, GPT, T5, and many more. It is the foundation for most modern large language models (LLMs), which are widely used in various applications.

The architecture was also adapted for other types of data (modalities), such as images and audio, leading to breakthroughs in computer vision and speech recognition. It paved the way for multimodal models that can process and generate content across different data types.

Transformer is an encoder-decoder architecture, so its inputs and outputs follow the general encoder-decoder pattern. However, Transformer is specifically designed to handle sequences of symbol tokens (words, subwords, or characters) in natural language processing tasks.

Encoder Inputs:

- A sequence of symbol tokens (words, subwords, or characters) \(x_1, x_2, \ldots, x_n\) of any length.

Encoder Outputs:

- A sequence of embeddings \(\mathbf{h}_1, \mathbf{h}_2, \ldots, \mathbf{h}_n\), one per input token; each embedding is a fixed-size vector.

Decoder Inputs:

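- A special start-of-sequence token `<SOS>` that indicates the beginning of the output sequence, followed by the tokens generated so far.

- The encoder outputs \(\mathbf{h}_1, \ldots, \mathbf{h}_n\), which the decoder attends to through cross-attention.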

Decoder Outputs:

- A single output token \(y_t\) at each step, which is appended to the decoder input, so the decoder sees `<SOS>`, \(y_1, \ldots, y_t\) when generating the next token. This repeats step by step (autoregression) until the end-of-sequence token `<EOS>` is produced.

Although Transformer can process variable-length sequential data, it doesn’t work like RNN or LSTM, which process data in a recurrent way. Instead, Transformer processes the entire sequence at the same time using the self-attention mechanism.

The main parts of Transformer (both encoder and decoder) are composed of multiple (\(N\times\)) blocks of multi-head self-attention layers and feed-forward neural networks, along with layer normalization and residual connections. Details of these components are explained in the following questions.

Input Embedding: The input tokens \(x_1, \cdots, x_n\) are first converted into dense vector representations (embeddings) \(\mathbf{X} = (\mathbf{x}_1, \cdots, \mathbf{x}_n) \in \mathbb{R}^{n\times d_\text{model}}\) using an embedding layer.

Positional Encoding: Since Transformer does not have a built-in notion of sequence order, positional encodings \(\text{PE}(1), \cdots, \text{PE}(n)\) provide information about the position of each token in the sequence. They are added to the input embeddings:

\[\mathbf{z}_i = \mathbf{x}_i + \text{PE}(i) \in \mathbb{R}^{d_\text{model}}\]

Encoder Stack: The resulting matrix \(\mathbf{Z} = (\mathbf{z}_1, \cdots, \mathbf{z}_n) \in \mathbb{R}^{n\times d_\text{model}}\) is passed through a stack of \(N\) encoder layers. Each encoder layer consists of:

- Multi-head self-attention: output \(\mathbb{R}^{n\times d_\text{model}}\)

- Layer normalization and residual connections are used to stabilize training and improve convergence.

- Feed-forward neural network: output \(\mathbb{R}^{n\times d_\text{model}}\)

- Layer normalization and residual connections are used to stabilize training and improve convergence.

- Because every sub-layer keeps the input and output dimension the same (\(d_\text{model}\) per token), residual connections can be added and multiple layers can be stacked. The output of the encoder stack is \(\mathbf{H} = (\mathbf{h}_1, \mathbf{h}_2, \ldots, \mathbf{h}_n) \in \mathbb{R}^{n\times d_\text{model}}\).
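The encoder layer described above can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not the paper's code: it uses single-head attention for brevity, applies the residual connection before layer normalization (the post-norm ordering of the original paper), omits dropout and the learnable layer-norm parameters, and uses random weights with toy sizes. The helper names (`encoder_layer`, `init_layer`, and so on) are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row max for numerical stability before exponentiating
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def layer_norm(x, eps=1e-6):
    # Normalize each token vector to zero mean and unit variance
    # (the learnable gain and bias are omitted in this sketch)
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def self_attention(Z, p):
    # Single-head scaled dot-product attention, kept short on purpose
    Q, K, V = Z @ p["Wq"], Z @ p["Wk"], Z @ p["Wv"]
    return softmax(Q @ K.T / np.sqrt(K.shape[-1])) @ V

def feed_forward(Z, p):
    # Position-wise FFN: linear -> ReLU -> linear, applied to every token
    return np.maximum(0, Z @ p["W1"] + p["b1"]) @ p["W2"] + p["b2"]

def encoder_layer(Z, p):
    # Each sub-layer: residual connection, then layer normalization
    Z = layer_norm(Z + self_attention(Z, p))
    return layer_norm(Z + feed_forward(Z, p))

def init_layer(rng, d_model, d_ff):
    # Random weights stand in for trained parameters
    s = 1.0 / np.sqrt(d_model)
    return {
        "Wq": rng.normal(0, s, (d_model, d_model)),
        "Wk": rng.normal(0, s, (d_model, d_model)),
        "Wv": rng.normal(0, s, (d_model, d_model)),
        "W1": rng.normal(0, s, (d_model, d_ff)),
        "b1": np.zeros(d_ff),
        "W2": rng.normal(0, 1.0 / np.sqrt(d_ff), (d_ff, d_model)),
        "b2": np.zeros(d_model),
    }

rng = np.random.default_rng(0)
n, d_model, d_ff, N = 5, 16, 64, 6   # toy sizes
Z = rng.normal(size=(n, d_model))    # stands in for embeddings + positional encodings
H = Z
for _ in range(N):
    H = encoder_layer(H, init_layer(rng, d_model, d_ff))
print(H.shape)  # (5, 16)
```

Because every sub-layer maps \(n \times d_\text{model}\) to \(n \times d_\text{model}\), the loop can stack as many layers as we like.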

Output Embedding: The special start-of-sequence token `<SOS>`, which indicates the beginning of the output sequence, is converted into a dense vector representation (embedding) \(\mathbf{x}'_1 \in \mathbb{R}^{d_\text{model}}\) using an embedding layer, and a positional encoding is added to it as on the encoder side.

Decoder Stack: \(\mathbf{x}'_1\) is passed through a stack of \(N\) decoder layers. Each decoder layer consists of:

- Masked multi-head self-attention, where each position can only attend to itself and earlier positions: output \(\mathbb{R}^{d_\text{model}}\)

- Layer normalization and residual connections are used to stabilize training and improve convergence.

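- Multi-head attention over the encoder’s output \(\mathbf{H}\) (cross-attention), where the queries come from the decoder and the keys and values come from \(\mathbf{H}\): output \(\mathbb{R}^{d_\text{model}}\)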

- Layer normalization and residual connections are used to stabilize training and improve convergence.

- Feed-forward neural network: output \(\mathbb{R}^{d_\text{model}}\)

- Layer normalization and residual connections are used to stabilize training and improve convergence.
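A single decoder layer can be sketched the same way. This is again a NumPy sketch assuming random weights, toy sizes, single-head attention, post-norm ordering, and no biases or dropout; `decoder_layer` and `causal_mask` are hypothetical names. The mask is what makes the self-attention masked: position \(i\) can only attend to positions \(j \le i\).

```python
import numpy as np

def softmax(x):
    # Row-wise softmax; -inf entries become exactly 0
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def layer_norm(x, eps=1e-6):
    mean = x.mean(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(x.var(axis=-1, keepdims=True) + eps)

def attention(Q, K, V, mask=None):
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    if mask is not None:
        # Disallowed positions get -inf so softmax gives them zero weight
        scores = np.where(mask, scores, -np.inf)
    return softmax(scores) @ V

def causal_mask(t):
    # mask[i, j] is True when position i may attend to position j, i.e. j <= i
    return np.tril(np.ones((t, t), dtype=bool))

def decoder_layer(Y, H, p):
    # 1) Masked self-attention over the decoder inputs generated so far
    mask = causal_mask(len(Y))
    sa = attention(Y @ p["Wq1"], Y @ p["Wk1"], Y @ p["Wv1"], mask)
    Y = layer_norm(Y + sa)
    # 2) Cross-attention: queries from the decoder, keys and values from encoder output H
    ca = attention(Y @ p["Wq2"], H @ p["Wk2"], H @ p["Wv2"])
    Y = layer_norm(Y + ca)
    # 3) Position-wise feed-forward network (biases omitted)
    return layer_norm(Y + np.maximum(0, Y @ p["W1"]) @ p["W2"])

rng = np.random.default_rng(0)
d_model, d_ff, n, t = 8, 32, 5, 3   # toy sizes
p = {k: rng.normal(0, 0.3, (d_model, d_model))
     for k in ["Wq1", "Wk1", "Wv1", "Wq2", "Wk2", "Wv2"]}
p["W1"] = rng.normal(0, 0.3, (d_model, d_ff))
p["W2"] = rng.normal(0, 0.3, (d_ff, d_model))
H = rng.normal(size=(n, d_model))   # stands in for the encoder output
Y = rng.normal(size=(t, d_model))   # stands in for <SOS> plus two generated tokens
out = decoder_layer(Y, H, p)

# Changing the last token must not affect earlier positions, thanks to the mask
Y2 = Y.copy()
Y2[-1] += 1.0
out2 = decoder_layer(Y2, H, p)
print(out.shape, np.allclose(out[:-1], out2[:-1]))  # (3, 8) True
```

The final check shows the effect of the mask: perturbing the last decoder input leaves the outputs of earlier positions unchanged.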

Output Layer: The final output \(\mathbf{y} \in \mathbb{R}^{d_\text{model}}\) from the decoder is passed through a linear layer followed by a softmax activation function to produce a probability distribution over the output vocabulary for the next position. With greedy decoding, the token \(y\) with the highest probability is selected as the output token at that position.

The entire forward pass is repeated autoregressively, feeding the previously generated token back into the decoder until the end-of-sequence token <EOS> is produced or a maximum length is reached.
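The output layer and the autoregressive loop can be sketched as follows. This assumes greedy decoding and uses a hypothetical `toy_decoder` function in place of the real decoder stack (which would re-run all decoder layers on the tokens so far and attend to the encoder output); the token ids chosen for `<SOS>` and `<EOS>` are also made up.

```python
import numpy as np

SOS, EOS = 0, 1  # hypothetical special-token ids

def output_layer(y, W_out, b_out):
    # Linear projection to vocabulary logits, then softmax -> probabilities
    logits = y @ W_out + b_out
    e = np.exp(logits - logits.max())
    return e / e.sum()

def greedy_decode(decoder_fn, W_out, b_out, max_len=20):
    tokens = [SOS]
    while len(tokens) < max_len:
        y = decoder_fn(tokens)              # decoder vector for the last position
        probs = output_layer(y, W_out, b_out)
        next_tok = int(np.argmax(probs))    # greedy: most probable token
        tokens.append(next_tok)             # feed it back as decoder input
        if next_tok == EOS:
            break
    return tokens[1:]                       # drop <SOS>

V = 6  # toy vocabulary size; d_model = V here so W_out can be the identity

def toy_decoder(tokens):
    # Hypothetical stand-in for the decoder stack (ignores the encoder output):
    # favours "previous token + 1" and emits <EOS> after token 4
    last = tokens[-1]
    nxt = 2 if last == SOS else (EOS if last == 4 else last + 1)
    return 5.0 * np.eye(V)[nxt]

print(greedy_decode(toy_decoder, np.eye(V), np.zeros(V)))  # [2, 3, 4, 1]
```

Greedy decoding is the simplest choice; the original paper used beam search, and sampling is common in generative LLMs.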

The embedding layer converts discrete tokens (words, subwords, or characters) into dense vector representations (embeddings).

- Each token \(x\) in the input sequence is first mapped to a unique index based on a predefined vocabulary dictionary \(V\).

- The index is then used to look up the corresponding vector \(\mathbf{e} \in \mathbb{R}^{d_\text{model}}\) in a table of embeddings, one for each token in the vocabulary.

- All embeddings have the same length, forming a matrix \(\mathbf{E} = \{\mathbf{e}_v\}_{v=1}^{|V|} \in \mathbb{R}^{|V| \times d_\text{model}}\). They are learnable model parameters.
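The two-step lookup above can be sketched with a made-up five-token vocabulary and random embeddings (in a trained model, \(\mathbf{E}\) is learned, and real vocabularies typically hold tens of thousands of subword tokens).

```python
import numpy as np

# Hypothetical toy vocabulary
vocab = {"<SOS>": 0, "<EOS>": 1, "the": 2, "cat": 3, "sat": 4}
d_model = 4
rng = np.random.default_rng(0)
E = rng.normal(size=(len(vocab), d_model))  # |V| x d_model; learned in a real model

tokens = ["the", "cat", "sat"]
ids = [vocab[t] for t in tokens]   # step 1: token -> index
X = E[ids]                         # step 2: index -> row of E
print(ids, X.shape)                # [2, 3, 4] (3, 4)

# The lookup is equivalent to multiplying one-hot vectors by E, just cheaper
one_hot = np.eye(len(vocab))[ids]
print(np.allclose(one_hot @ E, X))  # True
```

In practice the lookup is done by indexing rather than a matrix product, since the one-hot vectors are almost entirely zeros.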

Since Transformer does not have a built-in notion of sequence order, positional encodings can provide information about the position of each token in the sequence.

Positional encodings have the same size as the embeddings, so they can be added together:

\[ \text{PE} = (\text{PE}(1), \cdots, \text{PE}(n)) \in \mathbb{R}^{n \times d_\text{model}} \]

Rather than a single value for each position, each position is represented by a vector of the same dimension as the embeddings, \(d_\text{model}\). This provides a richer representation of positional information.

The vanilla positional encoding uses sine and cosine functions of different frequencies to generate unique positional encodings for each position in the sequence:

\[ \text{PE}(pos, 2i) = \sin\left(\frac{pos}{10000^{2i/d_\text{model}}}\right) \]

\[ \text{PE}(pos, 2i+1) = \cos\left(\frac{pos}{10000^{2i/d_\text{model}}}\right) \]

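Here \(pos\) is the position index and \(i\) indexes the dimension pairs, \(0 \le i < d_\text{model}/2\). The positional encoding is determined purely by the position index and is not learned.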
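The two formulas can be vectorized in NumPy as below. This is a sketch assuming an even \(d_\text{model}\) and positions counted from 0; the function name `sinusoidal_pe` is hypothetical. The last lines add the encodings to stand-in embeddings, as in \(\mathbf{z}_i = \mathbf{x}_i + \text{PE}(i)\).

```python
import numpy as np

def sinusoidal_pe(n, d_model):
    # Assumes an even d_model and positions counted from 0
    pos = np.arange(n)[:, None]                        # (n, 1)
    i = np.arange(d_model // 2)[None, :]               # (1, d_model/2)
    angle = pos / np.power(10000.0, 2 * i / d_model)   # (n, d_model/2)
    pe = np.zeros((n, d_model))
    pe[:, 0::2] = np.sin(angle)   # dimensions 2i
    pe[:, 1::2] = np.cos(angle)   # dimensions 2i+1
    return pe

n, d_model = 50, 16
pe = sinusoidal_pe(n, d_model)
X = np.random.default_rng(0).normal(size=(n, d_model))  # stand-in token embeddings
Z = X + pe                                              # z_i = x_i + PE(i)
print(pe.shape, pe[0, :4])  # (50, 16) [0. 1. 0. 1.]
```

Low dimensions oscillate quickly with position while high dimensions change slowly, so every position gets a distinct pattern.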

In self-attention, the query, key, and value vectors are all derived from the same input sequence \(\mathbf{Z} \in \mathbb{R}^{n \times d_\text{model}}\):

\[ \mathbf{Q} = \mathbf{Z} \mathbf{W}_Q, \mathbf{K} = \mathbf{Z} \mathbf{W}_K, \mathbf{V} = \mathbf{Z} \mathbf{W}_V \]

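\(\mathbf{W}_Q, \mathbf{W}_K, \mathbf{W}_V\) are learnable weights. The attention output is computed as: \[ \text{Attention}(\mathbf{Q}, \mathbf{K}, \mathbf{V}) = \text{softmax}\left(\frac{\mathbf{Q} \mathbf{K}^T}{\sqrt{d_k}}\right) \mathbf{V} \] where \(d_k\) is the dimension of the key vectors. Dividing by \(\sqrt{d_k}\) keeps the dot products from growing too large, which would push the softmax into regions with very small gradients.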

In this single-head form, \(\mathbf{Q}, \mathbf{K}, \mathbf{V}\) all have the same dimension \(d_\text{model}\), so \(d_k = d_\text{model}\). Please see AI concept takeaway: Attention for details of the attention mechanism.
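The attention formula translates almost line by line into NumPy. This is a single-head sketch with random weights and toy sizes; `self_attention` is a hypothetical helper that also returns the attention weights so we can inspect them.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row max for numerical stability
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(Z, W_Q, W_K, W_V):
    Q, K, V = Z @ W_Q, Z @ W_K, Z @ W_V        # each (n, d_k)
    d_k = K.shape[-1]
    weights = softmax(Q @ K.T / np.sqrt(d_k))  # (n, n); each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
n, d_model = 4, 8
Z = rng.normal(size=(n, d_model))
W_Q, W_K, W_V = (rng.normal(size=(d_model, d_model)) for _ in range(3))
out, weights = self_attention(Z, W_Q, W_K, W_V)
print(out.shape, weights.shape)  # (4, 8) (4, 4)
```

Each row of `weights` is a probability distribution describing how much token \(i\) draws from every token's value vector.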

Transformer uses multi-head attention. Instead of one set of full-size query, key, and value vectors, it computes several smaller ones, one set per head:

\[\mathbf{Q}_h = \mathbf{Z} \mathbf{W}_{Q_h}, \mathbf{K}_h = \mathbf{Z} \mathbf{W}_{K_h}, \mathbf{V}_h = \mathbf{Z} \mathbf{W}_{V_h}, h = 1, \cdots, H\]

Here each sub-vector has dimension \(d_h = d_\text{model} / H\), so each head has its own weight matrices \(\mathbf{W}_{Q_h}, \mathbf{W}_{K_h}, \mathbf{W}_{V_h} \in \mathbb{R}^{d_\text{model} \times d_h}\). The attention output for each head is computed independently:

\[\text{Attention}_h = \text{Attention}(\mathbf{Q}_h, \mathbf{K}_h, \mathbf{V}_h), h = 1, \cdots, H\]

Each head's output has dimension \(d_h = d_\text{model} / H\) per token. The outputs of all heads are then concatenated and linearly transformed to produce the final output of the multi-head attention layer:

\[\text{MultiHead}(\mathbf{Q}, \mathbf{K}, \mathbf{V}) = \text{Concat}(\text{Attention}_1, \cdots, \text{Attention}_H) \mathbf{W}_O\]

where \(\mathbf{W}_O \in \mathbb{R}^{d_\text{model} \times d_\text{model}}\) is a learnable weight matrix. The linear transformation is necessary because concatenation merely stacks the head outputs side by side and does not mix information across heads.
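The multi-head computation can be sketched as below, assuming NumPy, random weights, and toy sizes; `multi_head_attention` is a hypothetical helper. Rather than storing \(H\) separate matrices \(\mathbf{W}_{Q_h}\), it multiplies by one \(d_\text{model} \times d_\text{model}\) matrix and splits the result into \(H\) column blocks, which is the same as using the \(h\)-th block of columns as \(\mathbf{W}_{Q_h}\).

```python
import numpy as np

def softmax(x):
    # Softmax over the last axis, stabilized by subtracting the max
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def multi_head_attention(Z, W_Q, W_K, W_V, W_O, H):
    # H is the number of heads; d_model must be divisible by H
    n, d_model = Z.shape
    d_h = d_model // H

    def split(M):
        # (n, d_model) -> (H, n, d_h): column block h belongs to head h
        return M.reshape(n, H, d_h).transpose(1, 0, 2)

    Q, K, V = split(Z @ W_Q), split(Z @ W_K), split(Z @ W_V)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_h)       # (H, n, n)
    heads = softmax(scores) @ V                             # (H, n, d_h)
    concat = heads.transpose(1, 0, 2).reshape(n, H * d_h)   # (n, d_model)
    return concat @ W_O   # mixes information across heads

rng = np.random.default_rng(0)
n, d_model, H = 5, 16, 4
Z = rng.normal(size=(n, d_model))
W_Q, W_K, W_V, W_O = (rng.normal(size=(d_model, d_model)) for _ in range(4))
out = multi_head_attention(Z, W_Q, W_K, W_V, W_O, H)
print(out.shape)  # (5, 16)
```

Batching all heads into one array is a common implementation choice because it replaces a Python loop over heads with a few large matrix products.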

The input embeddings carry no information about the relationships between tokens in the sequence. Each embedding represents only the token itself (plus its encoded position), without any context from the surrounding tokens. The self-attention layers let the model dynamically focus on relevant parts of the input sequence when processing each token, so it can capture dependencies and contextual information effectively.

The assumption is that different heads can learn to focus on different aspects of the input sequence. For example, one head might learn to focus on syntactic relationships, while another head might focus on semantic relationships. By having multiple heads, the model can capture a richer set of dependencies and interactions between tokens in the sequence.